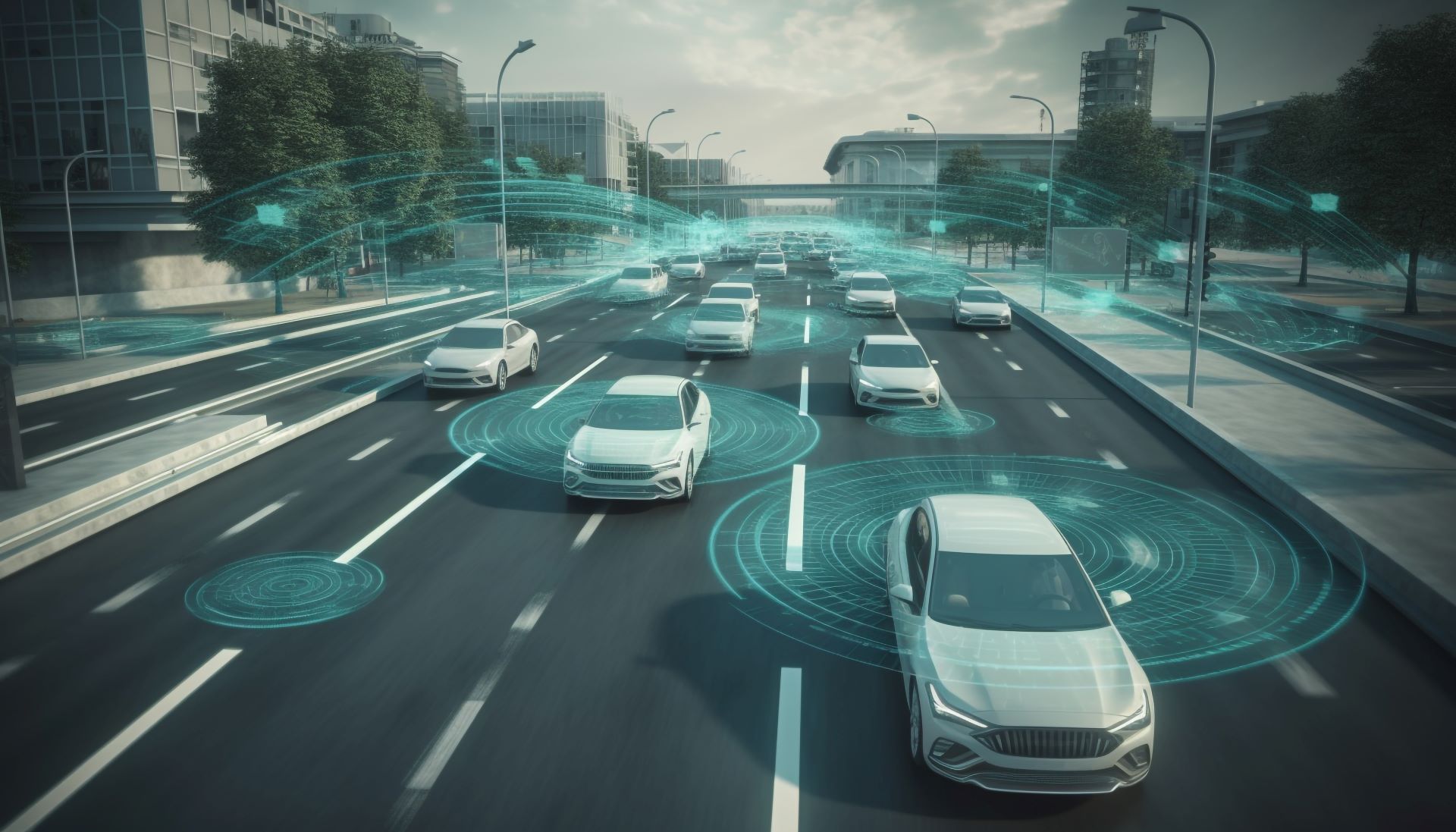

Advanced driver assistance systems are entering a transformative phase in 2026, with manufacturers moving decisively beyond basic safety aids toward deeply integrated, software-defined driving support. The next wave of ADAS is characterised by consolidation, artificial intelligence and increasingly sophisticated sensor fusion, all of which carry major implications for repairers, insurers and fleet operators.

Next-generation surround ADAS

From 2026, next-generation surround ADAS platforms such as those built on Mobileye’s EyeQ6H are entering mass-market vehicles. These unified systems merge lane centring, adaptive cruise control and traffic jam assist into a single architecture capable of extended hands-free highway driving.

By combining inputs from cameras, radar and high-definition maps, the vehicle can manage both longitudinal and lateral control with greater precision. Crucially, over-the-air updates will continuously expand functionality, meaning workshops will face more software-driven calibrations alongside traditional mechanical work. For insurers, shifting collision patterns will require updated risk and pricing models.

“Eyes-off” assist approaches Level 3

Several OEMs are preparing “eyes-off” hands-free capability for specific highway operational design domains. Sitting between Level 2+ and Level 3, these systems let drivers briefly take their eyes off the road while the vehicle handles the driving under tightly defined conditions.

Achieving this requires powerful autonomy chips running deep learning models in real time, supported by multi-layer sensor redundancy that often combines radar, cameras and LiDAR. Regulatory frameworks are still evolving, but limited regional rollouts are expected in 2026. Repairers will need new validation procedures to confirm that all sensors work together correctly after any repair.

AI-driven predictive hazard detection

ADAS is shifting from reactive to predictive safety. New AI-enhanced stacks use machine learning to interpret sensor data and anticipate hazards such as pedestrian intent or sudden cut-ins before traditional thresholds are crossed.

This predictive situational awareness improves collision avoidance in dense urban traffic and brings production vehicles closer to autonomous-style perception. The trade-off is a surge in event logging and data complexity, requiring insurers and repair networks to develop deeper diagnostic capabilities.

Integrated LiDAR, radar and vision

Sensor fusion is expanding rapidly in 2026 vehicles. Increasing numbers of models now combine LiDAR with radar and camera suites to improve three-dimensional perception and long-range detection, particularly in poor visibility.

As component costs fall, this once-premium configuration is moving into mainstream EVs and crossovers. The downside is greater post-repair complexity. Multiple overlapping sensors mean more calibration targets, tighter alignment tolerances and more extensive verification requirements.

Built-in dashcams and safety analytics

Another important shift is the integration of factory-fitted dashcams and event data recorders within ADAS platforms. These systems continuously monitor driving and automatically store footage of collisions, near misses and harsh braking events.

For insurers, this data supports more accurate claims validation and crash reconstruction. For repairers, event records increasingly form part of post-repair verification, confirming that ADAS functions operate correctly.

ADAS in 2026 is becoming more unified, predictive and data-rich. While the safety upside is significant, the technology also raises the bar for calibration accuracy, diagnostic depth and documented proof of system performance across the automotive ecosystem.

Staff Writer

Reporting from the front lines of the collision repair industry, delivering expert analysis and the technical updates that drive the African automotive sector forward.
